Create table redshift1/13/2024

Infrastructure / code may (will) need to be addressed as well but first things first. So I'd say that you, firstly, have a data lineage issue and that this needs to be addressed before looking at infrastructure. This can be done for only later phases if there is an advantage to that - common phase 0 and 1 but split phase 2 and 3. Now if your database has multiple independent data domains (dependency trees) you can break these apart and run them independently but be careful to ensure that things are independent. As data grows the impact of inefficiencies grows and new issues will arise. Once you have this linear flow defined you can see which SQL statements are the long tent poles for each phase and attack any performance issues in these statements. Every update SQL of each phase can run in parallel as all these SQL statements have already updated input tables (either N, or N - 1 versions). This will also address any performance issues arising from locks and stalls. Assuming the users table has only three columns: firstname, lastname, and email, and in that order INSERT INTO. If you have 10 columns, you have to specify 10 values and they have to be in order how the table was defined. Removing the loops will remove the serialization errors as long as each phase only runs AFTER the previous phase is complete (and committed). The simplest way to insert a row in Redshift is to to use the INSERT INTO command and specify values for all columns. If something cannot be defined as N - 1 version then you may need to add a phase to the flow. No loops! If you need to use some later phase information is needs to be defined as N - 1 (previous data update) information.

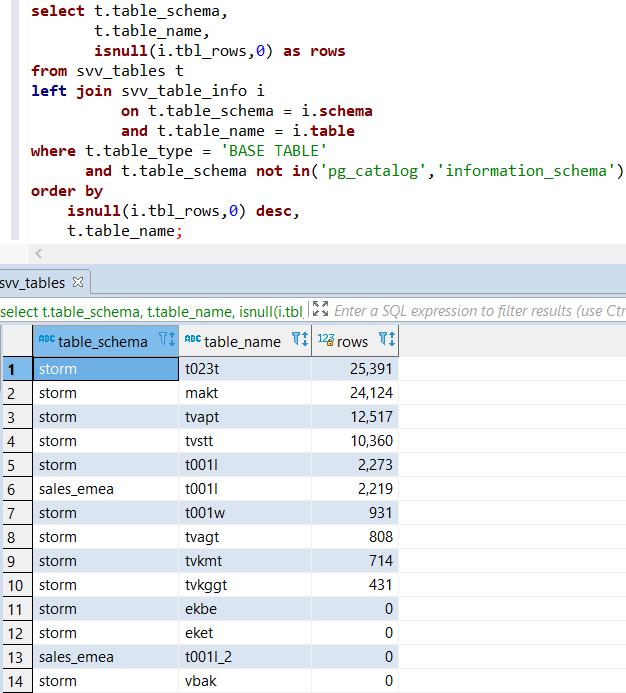

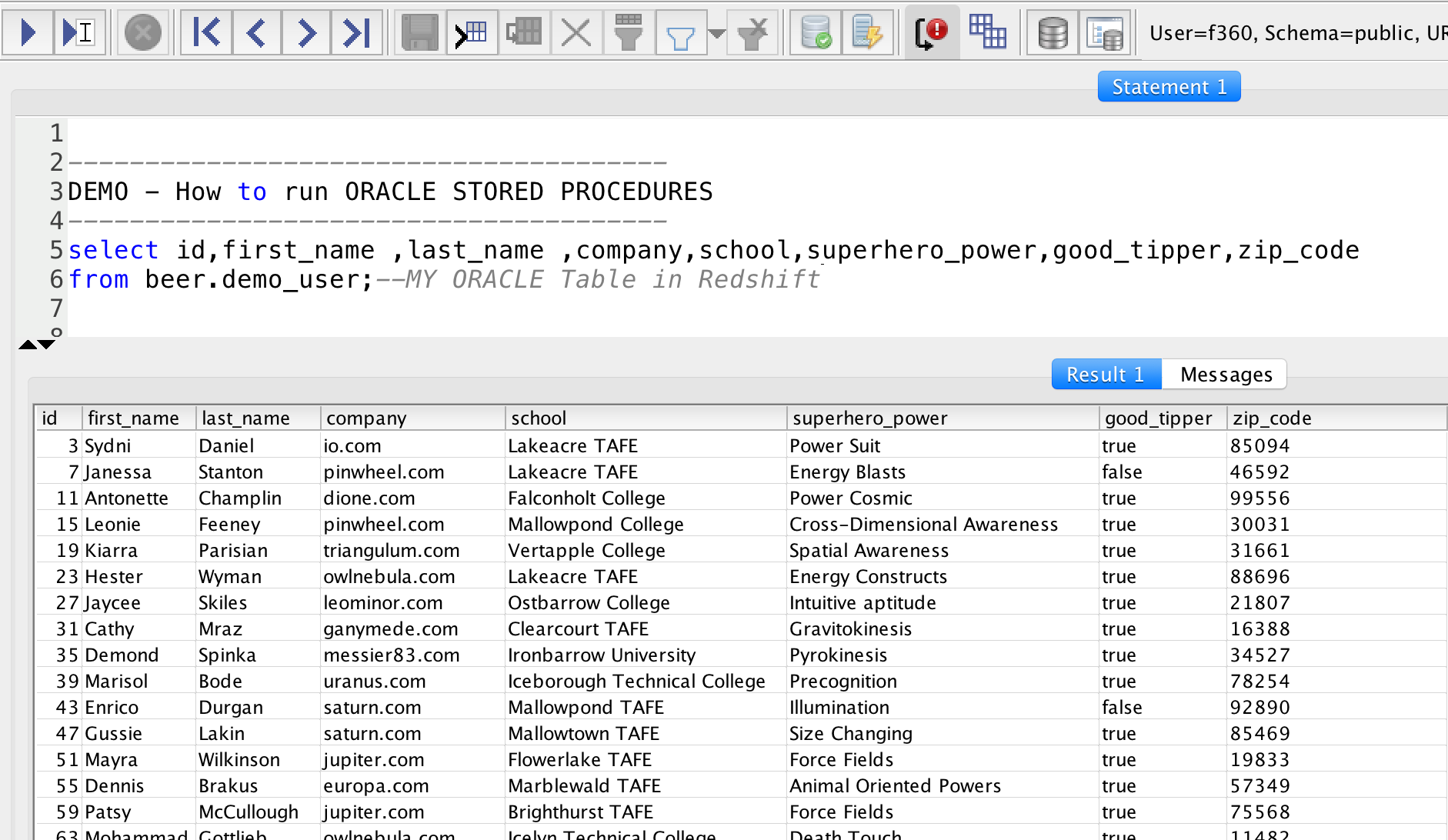

Redshift Spectrum scans the files in the specified folder and any subfolders. The external table statement defines the table columns, the format of your data files, and the location of your data in Amazon S3. What is important is that you define the linear flow of information for each update cycle. To define an external table in Amazon Redshift, use the CREATE EXTERNAL TABLE command.

These can depend on any previous phase of this cycle.Īgain you definition of phases can be different. marketing / Creating the 2 schemas with the data / CREATE / Give sales USAGE rights in schema, and read-only (SELECT) access to the tables within the. Phase 3 - update of derived / summary tables (phase 3 tables). These can only depend on Phase 1 or 0 tables for this cycle or if these updates need phase 2 or 3 information then these are defined as N - 1 versions of this data. Phase 2 - is the update of fact tables (phase 2 tables). If any of these phase 1 tables need information from other phase 1, 2, 3 (etc) tables then these need to be defined as information coming from the N-1, previous cycle of data update. Phase 1 - process & sanitize incoming data and apply this information to phase 1 tables. Phase 0 tables only depend on external data (and these may be temp tables in reality). This only depends on external information. (You phase definitions may vary but what is important is to think through these dependencies.)įirst, phase 0 - data load for this cycle (N). Let's define some phases of data update within Redshift for each update cycle. If you are getting serialization errors you have some circular dependencies that haven't been thought through (very common). You need to step back and look at the dependencies in your updates.

0 Comments

Leave a Reply.AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed